%reload_ext autoreload

%autoreload 2

%matplotlib inline

Tensors and Matrix multiplication¶

Basics of Pytorch Tensors and optimizing matrix multiplication

Pytorch Tensors¶

The Pytorch tensor is our basic unit. Everything we build here will be used to manipulate them in some way.

Its a container for data with lots of special properties and methods.

#export

import matplotlib.pyplot as plt

import matplotlib as mpl

mpl.rcParams['image.cmap'] = 'gray'

import torch

from torch import tensor

import graphviz

import os

import pandas as pd

import operator

from fastai import datasets

import gzip

import pickle

os.environ["PATH"] += os.pathsep + 'C:/Program Files (x86)/Graphviz/bin/'

a = tensor([[1,2,3],[4,5,6]])

b = tensor([[2,3],[2,3],[2,3]])

a.dtype

a

In-place and Out of place¶

x = torch.randn((3,3))

y = torch.randn((3,3))

Many of the builtin tensor operations can be used either "in-place" or "out-of-place".

For instance, add can be used "out-of-place" and will return the result of x and y

x

x.add(y)

But x has not been altered.

x

If on the other hand we use add_, y is added to x "in-place" and now x is the result of y and x

x.add_(y)

x

Elementwise Operations¶

Elementwise Addition and Subtraction

In the above example add is an elementwise operation: every element of x is added to its corresponding row and column unit in y.

$$ y + x = \begin{bmatrix} x_1 & x_2 & x_3 \\ x_4 & x_5 & x_6 \\ x_7 & x_8 & x_9 \end{bmatrix} + \begin{bmatrix} y_1 & y_2 & y_3 \\ y_4 & y_5 & y_6 \\ y_7 & y_8 & y_9 \end{bmatrix} = \begin{bmatrix} x_1 + y_1 & x_2 + y_2 & x_3 + y_3 \\ x_4 + y_4 & x_5 + y_5 & x_6 + y_6 \\ x_7 + y_7 & x_8 + y_8 & x_9 + y_9 \end{bmatrix} $$

The two tensors must be the same shape and the resulting matrix will be the same shape as well

x + y

x - y

Elementwise Product

$$ \vec{a} \circ \vec{b} = \begin{bmatrix} a_1 \\ a_2 \\ a_3 \\ a_4 \end{bmatrix} \circ \begin{bmatrix} b_1 \\ b_2 \\ b_3 \\ b_4 \end{bmatrix} = \begin{bmatrix} a_1 \times b_1 \\ a_2 \times b_2 \\ a_3 \times b_3 \\ a_4 \times b_4 \end{bmatrix} $$

a1 = torch.randn((3))

b1 = torch.randn((3))

a1,b1

a1 * b1

And again we can do this in place with the method mul_

a1.mul_(b1); a1

Broadcasting¶

The term broadcasting describes how arrays with different shapes are treated during arithmetic operations. The term broadcasting was first used by Numpy.

a

a > 0

Even though a is a 2x3 matrix the zero is broadcasted and then compared with each element in a

This happens whenever we try to do an operation between a matrix and a scalar:

a + 1

a * 2

Broadcasting also works between a vector and matrix:

a[0] * a

Tensor Rank and Shape¶

And if we reshape a to make it a column vector with three rows we can then broadcast it over b

a[0].unsqueeze(-1), b

(a[0].unsqueeze(-1) * b)

CUDA Tensors¶

The most important aspect of Tensors is that Pytorch has designed them to run on the GPU which results in significant speed.

Normally when we create a tensor the CPU will handle any operations done it.

b = torch.full((10,), 3)

print(b)

b.device

But if we specify the device we can run it on the GPU:

if torch.cuda.is_available():

a = torch.full((10,), 3, device=torch.device("cuda"))

print(a)

Data¶

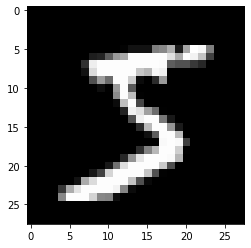

First, we need some sort of data. We'll be using the MNIST dataset . A classic.

The goal of the MNIST dataset is to predict the digit based on a handwritten grayscale image. Its a multiclass or multinomial classification problem.

Each image is 28 x 28 pixels. And they only have 1 channel.

#export

def test(a, b, comp, cname=None):

if cname is None: cname = comp.__name__

assert comp(a,b), f"{cname}: \n{a} \b{b}"

def test_eq(a,b):

test(a,b, operator.eq, "==")

TEST = 'test'

test_eq(TEST, 'test')

MNIST_URL = 'http://deeplearning.net/data/mnist/mnist.pkl'

path = datasets.download_data(MNIST_URL, ext='.gz')

The Fastai datasets library makes it simple to download and save it locally.

with gzip.open(path, 'rb') as f:

((x_train, y_train), (x_valid, y_valid), _) = pickle.load(f, encoding='latin-1')

The training and validation sets are Numpy arrays.

type(x_train)

The training set has 50,000 rows (examples) and each row has 784 features or 28x28 pixels

x_train.shape

A single row is a vector of values between 0 and 1 representing individual grayscale pixel values.

x_train[1, 450:500]

We can represent it by throwing a row into a pandas dataframe and using background gradients to differientate the pixel values.

n_img = x_train[1].reshape(28,28)

df = pd.DataFrame(n_img[4:26,5:26])

df.style.set_properties(**{'font-size':'6pt'}).background_gradient('Greys')

For Pytorch its necessary to convert the Numpy arrays into Tensors.

x_train, y_train, x_valid, y_valid = map(tensor, (x_train, y_train, x_valid, y_valid))

x_train[0][:20]

r,c = x_train.shape

Each image has a corresponding y value - an integer between 0 and 9.

y_train

y_train.shape, y_train.min(), y_train.max()

assert r==y_train.shape[0]==50000

test_eq(c,28*28)

test_eq(y_train.min(),0)

test_eq(y_train.max(),9)

We can view the first training example by reshaping it into a 28x28 matrix and using plt.imshow

img = x_train[0].view(28,28)

img.view(28,28).type()

plt.imshow(img)

Matrix Multiplication¶

The backbone of deep learning is matrix multiplication.

A matrix multiplication is a linear combination of the rows of some matrix, $X$ here, and the columns of a second matrix, $W$.

Its "linear" because we are only multplying and adding - not exponents or anything fancy.

$$ \begin{bmatrix} x_1 & x_2 & x_3 \\ x_4 & x_5 & x_6 \\ x_7 & x_8 & x_9 \end{bmatrix} \cdot \begin{bmatrix} w_1 & w_2 \\ w_3 & w_4 \\ w_5 & w_6\end{bmatrix} + \begin{bmatrix} b_1 \\ b_2 \\ b_3 \end{bmatrix} = \begin{bmatrix} a_1 & a_2 \\a_3 & a_4 \\ a_5 & a_6\end{bmatrix} $$

The $b$ vector is the bias. We'll discuss that at a later point.

For now its enough to imagine this fancy "neural network" being a box that takes inputs does some matrix multplication on them and outputs a prediction.

#export

def gv(s): return graphviz.Source('digraph G{ rankdir="LR"' + s + '; }')

gv('''neural_network[shape=box3d width=2 height=0.9]

input->neural_network->prediction''')

Matrix Multiplication v1¶

Now we'll start by writing a matrix multiplication function from scratch in pure Python.

def matmul(a,b):

"""Basic Matrix Multiplication in pure python"""

ar,ac = a.shape # num of rows x num of cols

br,bc = b.shape

assert ac==br # assert that there are the same number of columns in a as rows in b

c = torch.zeros(ar,bc) # initialize empty matrix for result

for i in range(ar):

for j in range(bc):

for k in range(ac):

c[i,j] += a[i,k] * b[k,j]

return c

We'll test that it works on a toy example:

$$ \begin{bmatrix} 1 & 2 & 3 \\ 4 &5 &6\end{bmatrix} \cdot \begin{bmatrix} 2 & 3 \\2 & 3\\2 & 3\end{bmatrix} = \begin{bmatrix} 12 & 18\\30 & 45\end{bmatrix} $$

a = tensor([[1,2,3],[4,5,6]])

b = tensor([[2,3],[2,3], [2,3]])

matmul(a,b)

Okay, it works. But since this is such a core operation of deep learning, repeatedly used in every neural network often with millions of parameters, we need it to be fast - very fast.

Let's make some matrices of random numbers as weights and use a few of our MNIST examples.

weights = torch.randn(784, 10)

bias = torch.zeros(10)

weights, bias

m1 = x_valid[:5]

m2 = weights

m1.shape, m2.shape

We'll use the builtin magic command %time:

"The CPU and wall clock times are printed, and the value of the expression (if any) is returned."

%time t1 = matmul(m1,m2)

For this rather small matrix multplication the pure python implementation took roughly 1 second.

We'll need to speed this up significantly.

t1.shape # output shape

Matrix Multiplication v2: Elementwise¶

The most obvious tweak we can make to the v1 is instead of multiplying and adding each element we can multiply rows and columns elementwise and then sum the results.

def matmul(a,b):

"""Elmentwise matrix multiplication in pure python"""

ar,ac = a.shape # num of rows x num of cols

br,bc = b.shape

assert ac==br # assert that there are the same number of columns in a as rows in b

c = torch.zeros(ar,bc) # initialize empty matrix for result

for i in range(ar):

for k in range(bc):

c[i,k] = (a[i,:] * b[:,k]).sum()

return c

matmul(a,b)

Again it works now let's see if how much that improved the speed.

%timeit -n 10 _= matmul(m1,m2)

795 / 1.21

Wow. Simply utliziing elementwise multiplication and Pytorch sum improved the speed by 600x

Let's create a quick test make sure our answers are (nearly) identical.

#export

def near(a,b):

"""Test if two tensors are nearly identical"""

return torch.allclose(a,b, rtol=1e-03, atol=1e-05)

def test_near(a,b):

test(a,b, near)

test_near(matmul(m1, m2), t1)

Matrix Multiplication v3: Broadcasting¶

Another way is to broadcast a row of a over b.

If we reshape each row of a to be a column vector we get:

a[0].unsqueeze(-1)

Now that can be broadcast over b and we'll do an elementwise multiplication:

a[0].unsqueeze(-1) * b

We can also use None to reshape a into a column vector.

a[0, ..., None] * b

We just need to sum over the columns:

(a[0].unsqueeze(-1) * b).sum(dim=0)

And that gives us our first row of the product of a and b.

We just need to iterate over each row of a and do this broadcast/elementwise operation.

def matmul(a,b):

ar,ac = a.shape

br,bc = b.shape

assert ac==br

c = torch.zeros((ar, bc))

for i in range(ar):

c[i] = (a[i].unsqueeze(-1) * b).sum(dim=0)

return c

matmul(a,b)

%timeit -n 10 _=matmul(m1, m2)

By eliminating one of the loops and relying on broadcasting it's about 4 times faster than just doing elementwise multiplication.

(795*1000) / 263

We're about 3000x faster than python now.

test_near(t1, matmul(m1, m2))

Matrix Multiplication v4: Einstein Summation¶

Einstein summation (einsum) is a compact representation for combining products and sums in a general way. From the numpy docs:

"The subscripts string is a comma-separated list of subscript labels, where each label refers to a dimension of the corresponding operand. Whenever a label is repeated it is summed, so np.einsum('i,i', a, b) is equivalent to np.inner(a,b). If a label appears only once, it is not summed, so np.einsum('i', a) produces a view of a with no changes."

def matmul(a,b):

return torch.einsum('ik,kj->ij', a, b)

%timeit -n 10 _=matmul(m1, m2)

It's even faster than v3... but the fundamentals are Pytorch.

Matrix Multiplication: Pytorch¶

Now we'll use Pytorch's matrix multiplication function.

Pytorch pushes the computation to a BLAS library called MKL (Math Kernel Library)

torch.__config__.show()

And its fast....

%timeit -n 10 t2 = m1.matmul(m2)

Compared to the triple loop pure python implementation Pytorch is 44,000 times faster!

(795*1000) /18

t2 = m1@m2

test_near(t1, t2)

'@'¶

The @ operater allows us to do vector-matrix operations, as well as matrix multiplications.

a = torch.randint(1,9, (3,))

b = torch.randint(1,9, (3,3)); a,b

a@b

b@a

!python notebook2script.py 01_tensors_matmul.ipynb